Is this excessive in your opinion?

Yes and no.

From a functional standpoint, yes, I think it is overkill. Repeating the observation a few hours later will improve precision, but repeating it back-to-back probably offers no practical benefit over simply running a single session of the same total duration. And, as I noted before, with the current firmware and constellations, sessions longer than 60 seconds appear to offer diminishing returns.

But there are other considerations. Matt Sibole has shown that, with our software, adding multiple sessions to a cluster average will dramatically reduce the error estimates of the point. This can be useful for demonstrating compliance with a prescribed positional tolerance (e.g., the ALTA/NSPS Land Title Survey requirements). I don't know that the resulting point is actually any more precise, but it is reported as more precise.
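To make the "reported as more precise" point concrete, here is a minimal Python sketch of a generic equal-weight cluster average. It is not Javad's algorithm: the error model (a 0.02 m bias shared by every epoch of back-to-back sessions, plus 0.01 m white noise at 1 Hz) and the helpers `simulate_record`, `split_sessions`, and `cluster_average` are hypothetical. Splitting one 60-second record into equal back-to-back sessions leaves the averaged position exactly the same as the single-session mean, while the formal standard error (s/√n) stays small because it is computed only from the scatter between sessions, so it never sees the shared bias.

```python
import math
import random
import statistics


def simulate_record(n_epochs, bias, noise_sd, seed=1):
    """Hypothetical 1 Hz height errors (m): one bias shared by every epoch plus white noise."""
    rng = random.Random(seed)
    return [bias + rng.gauss(0.0, noise_sd) for _ in range(n_epochs)]


def split_sessions(record, n_sessions):
    """Cut one continuous record into equal back-to-back sessions (any remainder is dropped)."""
    size = len(record) // n_sessions
    return [record[i * size:(i + 1) * size] for i in range(n_sessions)]


def cluster_average(sessions):
    """Equal-weight mean of the session means and its formal standard error s / sqrt(n)."""
    means = [statistics.fmean(s) for s in sessions]
    avg = statistics.fmean(means)
    se = statistics.stdev(means) / math.sqrt(len(means))
    return avg, se


record = simulate_record(60, bias=0.02, noise_sd=0.01)
single_mean = statistics.fmean(record)

for n in (2, 3, 6):
    avg, se = cluster_average(split_sessions(record, n))
    # avg equals the single-session mean; only the reported SE depends on the split,
    # and it is computed from between-session scatter, so the shared bias never enters it.
    print(f"{n} sessions: mean {avg:+.4f} m, formal SE {se:.4f} m "
          f"(single session {single_mean:+.4f} m)")
```

In this toy model the bias is identical in every session, which is roughly what back-to-back sessions under the same satellite geometry look like; sessions hours apart would not share it so completely.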

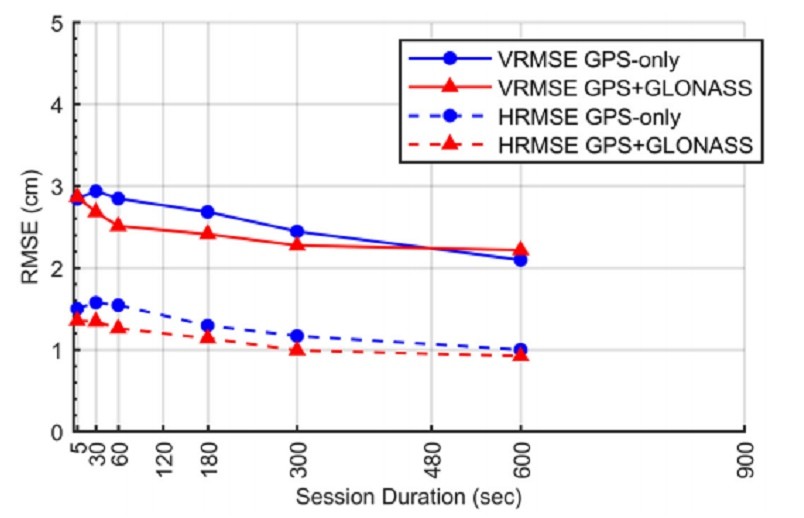

One thing we seldom discuss is the vertical slope during collection. Javad added this a long time ago, and the theory behind it is solid, but I don't think anyone really uses it. The idea is that you want to stay long enough to capture at least one full cycle of the sine wave that appears in the vertical scatter plot during collection. Please forgive me for not having any context for the following slide; I'm taking the word of the person who posted it on Surveyor Connect that it comes from an NGS presentation.
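A minimal Python sketch of why a flat-looking vertical plot is not enough on its own, assuming (hypothetically) that the vertical error is a pure sine with a 0.02 m amplitude and a 120-second period, sampled at 1 Hz. Over the first 60 seconds the window covers only the crest, so an ordinary least-squares trend line through the heights is essentially flat, yet the mean sits roughly 12 mm high; extending the window to a full 120-second cycle brings the mean back to zero. `ols_slope` and `sample_window` are illustrative helpers, not part of any receiver software.

```python
import math


def ols_slope(times, heights):
    """Ordinary least-squares slope (m/s) of height against time."""
    n = len(times)
    t_bar = sum(times) / n
    h_bar = sum(heights) / n
    sxy = sum((t - t_bar) * (h - h_bar) for t, h in zip(times, heights))
    sxx = sum((t - t_bar) ** 2 for t in times)
    return sxy / sxx


def sample_window(duration_s, amplitude=0.02, period_s=120.0):
    """1 Hz epochs from t = 0 to t = duration_s inclusive of a hypothetical sinusoidal vertical error (m)."""
    times = [float(t) for t in range(int(duration_s) + 1)]
    heights = [amplitude * math.sin(2 * math.pi * t / period_s) for t in times]
    return times, heights


# Half cycle: the window holds only the crest, so the trend line is flat but the mean is high.
t_half, h_half = sample_window(60)
print(f"60 s: trend {ols_slope(t_half, h_half) * 1000:+.4f} mm/s, "
      f"mean {sum(h_half) / len(h_half):+.4f} m")

# Full cycle: crest and trough are both sampled, so they cancel in the mean.
t_full, h_full = sample_window(120)
print(f"120 s: mean {sum(h_full) / len(h_full):+.4f} m")
```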

Notice that in their tests, 30 seconds actually produced poorer statistics than 5 seconds. At 60 seconds, the results are back on par with 5 seconds, and they improve from there. I could be wrong, but I suspect this is because the first 30 seconds usually sample only one part of that sine wave (think of having epochs only at the peak or only in the trough), and the additional time adds epochs from the rest of the cycle. Including epochs from both sides of the wave's phase will result in a mean slope of 0. You need enough epochs to make sure the sample actually includes both the top and the bottom of the wave, rather than just the top (which would appear as a 0-slope line on the graph) or just the bottom (which would also appear as a 0-slope line). So a very short data set may look horizontal when in fact it is simply not long enough to reveal the shape of the sine wave.

This may also be why, in the above chart, the vertical precision at 180 seconds lies above the straight line between 60 and 300 seconds. Clearly 180 is better than 60 in this graph, but you would expect it to follow the shape of the horizontal RMSE, which falls below the straight line between 60 and 300. Perhaps at 180 seconds the data span several full cycles plus a fraction of another, so the partial cycle still biases the solution a bit, though less so because one or more full cycles are already part of the average.
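The fractional-cycle idea can be checked with the same kind of hypothetical sine model (0.02 m amplitude, 120-second period, 1 Hz epochs; all assumptions chosen for illustration, not values taken from the NGS slide). For a pure sine of amplitude A and period T, the mean over a window of length L is zero when L is a whole number of periods, and otherwise its magnitude is at most AT/(πL), whatever the starting phase. So a window of a few cycles plus a half cycle (180 seconds here) still carries some bias, but less than a single half cycle does. The sketch computes the sampled mean for several durations alongside that bound; `window_mean_bias` and `worst_case_bias` are hypothetical helper names.

```python
import math


def window_mean_bias(duration_s, amplitude=0.02, period_s=120.0, rate_hz=1.0):
    """Mean (m) of a hypothetical sinusoidal vertical error sampled from t = 0 to t = duration_s inclusive."""
    n = int(duration_s * rate_hz) + 1
    heights = [amplitude * math.sin(2 * math.pi * (i / rate_hz) / period_s) for i in range(n)]
    return sum(heights) / n


def worst_case_bias(duration_s, amplitude=0.02, period_s=120.0):
    """Continuous-time bound A*T/(pi*L) on |mean| over any window of length L, whatever the phase."""
    return amplitude * period_s / (math.pi * duration_s)


for duration in (5, 30, 60, 120, 180, 240, 300):
    cycles = duration / 120.0
    print(f"{duration:3d} s ({cycles:.2f} cycles): bias {window_mean_bias(duration):+.4f} m, "
          f"bound {worst_case_bias(duration):.4f} m")
```

With this assumed period, 30 seconds comes out worse than 5 seconds, echoing the pattern on the slide, but the numbers themselves mean nothing beyond the toy model.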

This is mostly guesswork on my part, but I can say that users will probably do well to watch the vertical scatter plot to decide when to stop observing.

Also note that this chart is based on GPS and GLONASS only. I believe the reason we're seeing such good precision with shorter observations is the addition of Galileo and BeiDou, particularly BeiDou, which seems to be providing the most precision of the four systems currently in use.