Tyler


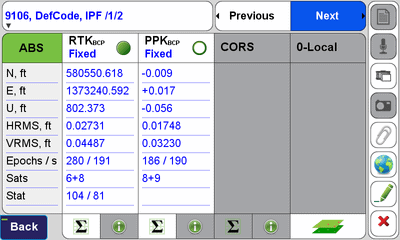

I'm curious whether anyone has good ideas for a senior project involving RTK surveying, specifically using a Triumph LS rover and a T1M base. The project needs both a field component and an analysis component. I'm interested in tying it to positional accuracy in boundary retracement, but I'd like to hear any and all other ideas. The LS and base I have access to can track BeiDou and Galileo satellites, which opens up some additional possibilities. Thanks!